You’ve mastered prompt writing. Maybe you even use a few frameworks. But the AI’s output is still generic, inconsistent, and not good enough for real business. The results feel random. Scaling any AI initiative is a total crapshoot.

Let’s be clear: this isn’t your fault. The problem isn’t your prompt.

Your AI Prompts Are Failing And You Don’t Know Why

As a Fractional Chief AI Officer, I see this same frustration every single day. In boardrooms and on Slack. You and I know that basic prompting gets you about 20% of the way there. A decent start. But just the start.

You’re hitting a wall because you’re giving the AI simple commands. What it really needs is a sophisticated, structured briefing.

The truth is, the AI doesn’t have your company’s unique perspective. Your customer data. Your strategic goals. Asking it to write a high-converting ad without that information is like asking a new hire to lead a client meeting on their first day. No prep. Destined to fail.

The missing ingredient is high-quality context.

Moving Beyond Amateur Hour

This is where professionals separate from amateurs. While your competitors are still trying to find the “magic words” for the perfect prompt, you can build a system that feeds the AI everything it needs to perform at an elite level.

This is the shift from just prompting to the professional-grade discipline of context engineering.

To understand why your AI prompts are failing, you first need a firm grip on the fundamentals. A Clear Guide to AI Prompts lays that groundwork for the more advanced techniques. But moving beyond the basics is essential for real results.

You can’t build a scalable, revenue-generating AI function on a foundation of hope and clever phrasing. You need an engineering approach.

Context engineering is about building the entire information ecosystem an AI uses to generate a response. It’s designing the briefing, not just writing the request.

This isn’t about getting slightly better answers from a chatbot. It’s about building a permanent, decisive competitive advantage. It’s how you create a “bionic” marketing system your rivals can’t replicate. Their AI is stuck on beginner mode, spitting out the same generic content as everyone else.

This is the key to unlocking real business growth with AI. How you go from playing in the sandbox to dominating your market.

Forget tinkering with one-off prompts. It’s time to start building systems. Time to become a context engineer.

What Is Context Engineering And Why It Matters

Let’s get one thing straight. Most people believe getting good at AI means finding the “magic words” for a prompt. They’re wrong. That’s why their results are inconsistent and you can’t build a reliable business on them. The real shift happens when you move to a discipline I call context engineering.

I define context engineering as the art and science of designing the entire information ecosystem an AI model uses to generate a response. It’s not about the single prompt you write; it’s about the complete system surrounding it.

This system includes the specific data we pull in real-time. The memory of past conversations we give the model. And the multi-step workflows we build around it. It’s the difference between asking a total stranger for directions versus handing a master navigator a detailed map, a compass, and your exact destination.

From Simple Questions to Sophisticated Briefings

Think of it like this. You’re hiring a brilliant consultant who has complete amnesia. A simple prompt is giving this consultant a one-sentence task with zero background. You’ll get a generic, uninspired answer because they have nothing to work with.

Context engineering is the exact opposite. It’s handing that same consultant a perfectly organized briefing document, a folder of relevant past projects, and giving them access to a specific, curated internal database. The quality of their work will be worlds apart.

You’re no longer just asking a question. You’re providing a universe of relevant information so the AI can reason and create from your unique business reality. This is how you get outputs that sound like your brand, reflect your data, and actually drive your revenue goals.

Context engineering transforms the AI from a clever parrot into a trusted, high-performing team member. You stop getting generic answers and start getting strategic results that directly impact your bottom line.

This discipline became critical as large language models evolved. When models with bigger “context windows” came out, everyone thought we could just cram more and more data into the prompt. That was a disaster.

It led to a problem we call “context rot.” The model’s performance actually gets worse as you feed it more information. Like a person in a meeting with too many people talking at once, the AI loses focus. Its reasoning degrades. The output quality nosedives. A real engineering challenge.

The “aha” moment for every serious AI practitioner is realizing that structured, relevant context beats massive, unstructured context every single time. This is what separates amateurs from professionals who build scalable, revenue-generating AI systems. For a deeper dive on how these two disciplines differ, you can read my comparison of context engineering vs. prompt engineering.

Why This Is Your New Competitive Edge

Mastering this isn’t some academic exercise. It’s a direct path to dominating your market. I’ve worked with AI since 2019, and in that time I’ve watched context engineering move quickly from a basic technique to a sophisticated discipline.

The market has proven this out, emphatically. The global LLM market, which depends on these advanced techniques, is projected to explode from $4.5 billion in 2023 to $82.1 billion by 2033. This isn’t hype. It’s a massive economic shift you can either ride or be crushed by.

Alright, let’s get out of the clouds of theory and into the workshop. It’s one thing to know what context engineering is. It’s another thing entirely to know what a context engineer actually builds. This isn’t about abstract concepts—it’s about the specific toolkit you and I can use to build AI systems that give a business a real, defensible edge.

The work boils down to four core techniques.

Master these, and you’ll stop tinkering with one-off prompts for generic blog posts. You’ll start building an engine that creates tangible market advantages.

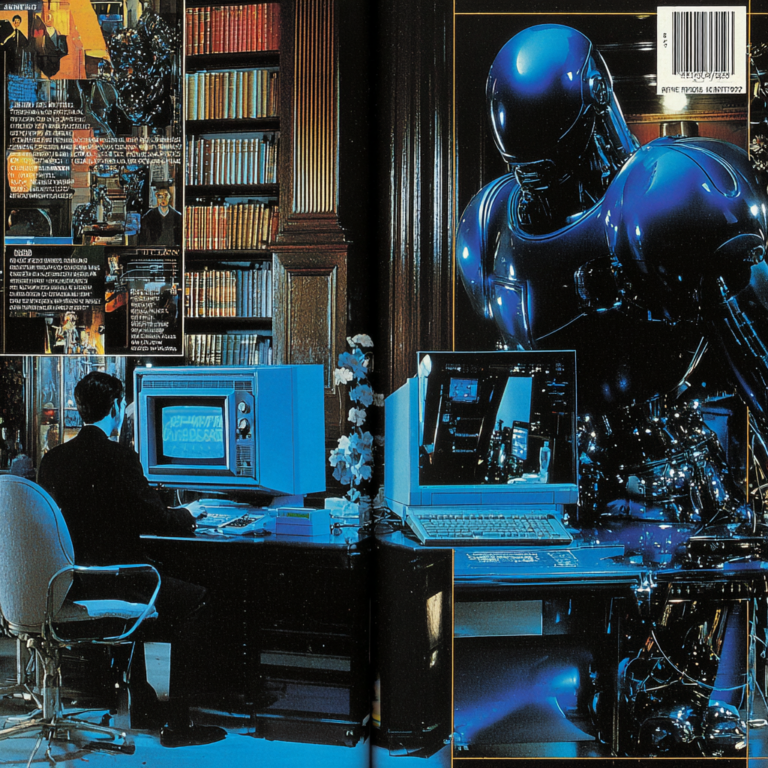

This diagram shows how the moving parts—data, memory, and workflows—fit together to give the AI the exact briefing it needs to do valuable work.

The big idea here is that you’re building a dynamic system, not just writing static prompts. Each piece informs the others, ensuring the AI’s “briefing” is always current, relevant, and perfectly structured for the job at hand.

1. Advanced Prompt Structuring

This is the bedrock. Prompt structuring is where you stop writing messy paragraphs and start creating machine-readable briefing documents. Instead of throwing a wall of natural language at the AI, you use structured formats like XML or JSON to organize your instructions.

Why bother? Because structure crushes ambiguity. It forces the model to treat different pieces of your context with the exact weight and purpose you intend.

You create explicit, clearly defined sections for each type of context:

- <brand_voice>: This tag holds the specific tone, vocabulary, and stylistic rules the AI must follow.

- <customer_persona>: Here, you detail the target audience, their specific pain points, and the emotional triggers that resonate with them.

- <negative_constraints>: You explicitly list what the AI must not do, like using certain jargon, making unsupported claims, or mentioning a competitor.

- <example_output>: This contains a gold-standard example of the exact format, length, and quality you expect.

This isn’t about making prompts longer. It’s about making them smarter. For a marketing campaign, you might create a <product_data> tag with technical specs and a <market_data> tag with a summary of recent competitor moves. This tells the AI precisely which information to use for which part of its reasoning. The output’s relevance and accuracy improve dramatically.
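Here’s a minimal sketch of what that structuring can look like in Python. The helper name `build_prompt` and the closing `<task>` tag are my own illustration, not a standard; the point is simply that each kind of context gets its own clearly labeled section.

```python
from xml.sax.saxutils import escape


def build_prompt(task: str, sections: dict[str, str]) -> str:
    """Wrap each piece of context in its own XML-style tag, then append the task."""
    parts = []
    for tag, content in sections.items():
        # Escape &, < and > so context text cannot be mistaken for a tag.
        parts.append(f"<{tag}>\n{escape(content.strip())}\n</{tag}>")
    # The <task> tag is a hypothetical name chosen for this sketch.
    parts.append(f"<task>\n{escape(task.strip())}\n</task>")
    return "\n\n".join(parts)


prompt = build_prompt(
    task="Write a 50-word product blurb.",
    sections={
        "brand_voice": "Plain, confident, no exclamation marks.",
        "customer_persona": "Busy ecommerce founders who distrust hype.",
        "negative_constraints": "Do not mention competitors by name.",
        "example_output": "Ships in two days. Built to last ten years.",
    },
)
print(prompt)
```

Because the sections live in a dictionary, you can swap in a different persona or example without touching the rest of the briefing.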

2. Retrieval-Augmented Generation (RAG)

If advanced structuring is the briefing doc, Retrieval-Augmented Generation (RAG) is the AI’s personal, just-in-time research library. It’s one of the most powerful techniques in our toolkit. It solves the AI’s “stale knowledge” problem once and for all.

An LLM’s knowledge is frozen at the moment its training ends. RAG breaks it out of that prison by connecting it to live, external data sources.

Here’s how it works. A customer asks your support bot about a feature you shipped last week. The base model, trained a year ago, has no idea what they’re talking about. With RAG, the system “retrieves” relevant documents from your company’s knowledge base—like the latest release notes. Then, it “augments” the AI’s context with that fresh information before generating an answer.

You’re not just asking the AI a question. You’re handing it an open-book test with the textbook already opened to the right page.

For a business, this is a complete game-changer. Imagine feeding an AI your latest sales figures from a database, real-time customer feedback from your CRM, and the top three competitor press releases—all before asking it to draft a quarterly strategy memo. The result is an analysis grounded in today’s reality, not last year’s training data.

The catch? Implementing RAG is more technical than writing prompts. At scale, you’ll typically need a vector database to store and search your private data efficiently. But the ROI can be massive. It transforms a generalist AI into a true domain expert on your business.
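To make the retrieve-then-augment flow concrete, here’s a toy sketch in Python. It ranks documents by simple word overlap instead of real embeddings, and the knowledge base, the tag names, and the function names are all made up for illustration. A production system would swap in a proper retriever, but the shape of the flow is the same.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase the text and return its distinct words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, documents: list[str], top_k: int = 2) -> list[str]:
    """Rank documents by how many distinct words they share with the question."""
    q = tokenize(question)
    scored = [(len(q & tokenize(doc)), doc) for doc in documents]
    scored = [pair for pair in scored if pair[0] > 0]  # drop irrelevant documents
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:top_k]]


def augment(question: str, retrieved: list[str]) -> str:
    """Place the retrieved text in the prompt ahead of the question."""
    context = "\n".join(f"- {doc}" for doc in retrieved)
    return (
        "<retrieved_context>\n" + context + "\n</retrieved_context>\n\n"
        "<question>\n" + question + "\n</question>"
    )


# Hypothetical knowledge base entries, invented for this example.
knowledge_base = [
    "Release notes: bulk export to CSV shipped last week for all plans.",
    "Refund policy: refunds are available within 30 days of purchase.",
    "Office hours: support is staffed Monday through Friday.",
]

question = "How do I use the new bulk export feature?"
prompt = augment(question, retrieve(question, knowledge_base, top_k=1))
print(prompt)
```

Only the release notes make it into the prompt here. The refund policy and office hours stay out, which is exactly the kind of filtering that keeps the context relevant.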

3. Memory Systems for Continuity

A standard LLM has the memory of Dory from Finding Nemo. Once an interaction ends, it forgets everything. This is a huge problem for any task requiring more than a single step. Memory systems solve this by providing continuity, turning one-off exchanges into a coherent workflow.

For a customer, this means the AI remembers their name, their previous support ticket, and the solutions they’ve already tried. The difference between a helpful assistant and a frustratingly repetitive bot.

In a content creation workflow, a memory system can track the key arguments made in the first three sections of an ebook. When you ask the AI to write the fourth section, it knows what’s already been covered. It builds on it without repeating itself. This is how you build an AI that doesn’t just write a paragraph but helps you execute a month-long content strategy.
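Here’s one way the ebook example could be sketched in Python. The class name `SectionMemory` and the `<already_covered>` tag are hypothetical, and real memory systems are usually more involved (summarization, storage, retrieval). This just shows the core move: record what’s done, then hand it back to the model before the next step.

```python
from dataclasses import dataclass, field


@dataclass
class SectionMemory:
    """Keeps a short summary of each finished section, oldest first."""
    max_items: int = 10
    summaries: list[str] = field(default_factory=list)

    def remember(self, title: str, key_points: str) -> None:
        self.summaries.append(f"{title}: {key_points}")
        # Keep only the most recent entries so the context stays small.
        self.summaries = self.summaries[-self.max_items:]

    def as_context(self) -> str:
        """Render the stored summaries as a tagged block for the next prompt."""
        if not self.summaries:
            return "<already_covered>\n(nothing yet)\n</already_covered>"
        body = "\n".join(f"- {s}" for s in self.summaries)
        return f"<already_covered>\n{body}\n</already_covered>"


memory = SectionMemory(max_items=3)
memory.remember("Section 1", "why generic prompts fail")
memory.remember("Section 2", "what context engineering is")
memory.remember("Section 3", "the four core techniques")

next_prompt = (
    memory.as_context()
    + "\n\nWrite Section 4 without repeating the points above."
)
print(next_prompt)
```

The `max_items` cap is a deliberate design choice: memory that grows without limit eventually becomes its own source of noise.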

4. Agentic Chaining and Workflows

This is where all the pieces click into place. Agentic Chaining is how you build a digital assembly line by linking specialized AI calls together. Instead of trying to make one giant, complex prompt do everything, you orchestrate a workflow of smaller, single-purpose AI “agents.”

Think about creating a new product landing page. An agentic workflow would look something like this:

- Researcher Agent: Takes a product name, scrapes the web for the top 5 competing landing pages, and extracts their key features, value props, and pricing.

- Copywriter Agent: Uses the researcher’s output, your brand voice guide, and a customer persona to write compelling headline and body copy.

- SEO Specialist Agent: Takes the copy, optimizes it for your target keywords, and then generates the final meta title and description.

Each agent is an expert at its one job, passing its finished work to the next specialist in the chain. Far more reliable and scalable than trying to get one AI to do everything at once. You’re no longer just prompting. You’re orchestrating a team of digital specialists to execute a business process.
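The landing page chain above can be sketched in a few lines of Python. Everything here is illustrative: `fake_llm` is a stand-in that just echoes the first line of its prompt, so the example runs without any API key, and the agent functions are hypothetical names. In a real system you’d pass in a function that calls your model provider.

```python
from typing import Callable

LLM = Callable[[str], str]


def fake_llm(prompt: str) -> str:
    """Stand-in for a real model call; returns a labeled echo of the first line."""
    first_line = prompt.splitlines()[0]
    return f"[output for: {first_line}]"


def researcher(product: str, llm: LLM) -> str:
    return llm(f"List competitor features for {product}")


def copywriter(research: str, brand_voice: str, llm: LLM) -> str:
    return llm(f"Write landing page copy\nResearch: {research}\nVoice: {brand_voice}")


def seo_specialist(copy: str, keywords: list[str], llm: LLM) -> str:
    return llm(f"Optimize copy for {', '.join(keywords)}\nCopy: {copy}")


def run_chain(
    product: str, brand_voice: str, keywords: list[str], llm: LLM = fake_llm
) -> dict[str, str]:
    """Run the three agents in order, feeding each one the previous output."""
    research = researcher(product, llm)
    copy = copywriter(research, brand_voice, llm)
    final = seo_specialist(copy, keywords, llm)
    # Return every intermediate result so each step can be inspected later.
    return {"research": research, "copy": copy, "final": final}


result = run_chain(
    "trail running shoes", "plain and confident", ["trail shoes", "running"]
)
print(result["final"])
```

Passing the model in as a parameter keeps each agent testable on its own, which matters once the chain grows past three steps.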

This is the essence of building a truly bionic system.

How to Build a Bionic Marketing System

Enough theory. The best way to really get a handle on context engineering is to build something that makes you money. So let’s walk through a tangible, revenue-generating asset: a “bionic” marketing system for an ecommerce brand.

The mission is simple. We want to automate the creation of high-converting product description pages. This slashes the time to get a new product to market. And boosts its performance. This isn’t a thought experiment—it’s a direct path to ROI.

Step 1: Assemble Your Static Context

First, we gather the foundational pieces that rarely change. This is your Static Context, the bedrock of your brand’s digital identity. Think of it as the company’s DNA, which we need to encode for the AI.

This includes things like:

- Brand Voice Guide: Not just “friendly and professional.” We need specifics. A list of approved words, forbidden jargon, rules on sentence length, and the precise emotional tone.

- SEO Keyword Bible: Your primary and secondary keywords for the product category, plus any negative keywords we must avoid.

- Target Persona Documents: Detailed profiles of your ideal buyers—their goals, frustrations, and the exact language they use to talk about their problems.

You and I both know these documents often get created once and then buried in Google Drive. For context engineering, they become living assets, meticulously organized and ready to be fed into our system.

Step 2: Gather Dynamic, High-Signal Context

Next, we collect information that changes with every new product or market shift. This is the Dynamic Context. Its freshness is what gives your AI its competitive edge. Your rivals are using stale training data; you’re using today’s reality.

For our ecommerce example, this means grabbing:

- Raw Product Specs: The manufacturer’s data sheet—all the dimensions, materials, and features.

- Fresh Customer Reviews: The top 5-10 most recent reviews for similar products. You want both the praise and the complaints. This is pure gold.

- Competitor Product Pages: The URLs of the top 3 competing product pages. Our system will analyze these for positioning and messaging gaps.

This dynamic layer ensures the AI isn’t just writing generic copy. It’s entering a live market conversation, armed with the latest intelligence.

By feeding the AI the exact words customers use in reviews, you’re not just optimizing for keywords. You’re optimizing for resonance. That’s how you turn a browsing visitor into a paying customer.

Step 3: Design the Master Prompt Template

Now we structure all this information into a master prompt. We’ll use clear, machine-readable tags—like that XML structure I mentioned—to create a perfect briefing document. It’s not just a request; it’s a blueprint.

Generate a product description page.

[Your detailed brand voice rules here]

[Primary, secondary, and negative keywords here]

[Product data sheet here]

[Top 5 positive and negative review themes here]

This structured template gets rid of ambiguity. It tells the AI exactly what each piece of information is and how to use it, forcing it to generate output that is on-brand, on-message, and optimized for search.
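If you want to see how Steps 1 through 3 might fit together in code, here’s a rough Python sketch. The tag names (`<brand_voice>`, `<seo_keywords>`, `<product_specs>`, `<review_themes>`) and the class names are my assumptions for illustration; use whatever labels match your own documents. The check for empty fields is there because a template with a missing section fails quietly otherwise.

```python
from dataclasses import dataclass
from string import Template


@dataclass
class StaticContext:
    """Context that rarely changes (Step 1)."""
    brand_voice: str
    seo_keywords: str


@dataclass
class DynamicContext:
    """Context gathered fresh for each product (Step 2)."""
    product_specs: str
    review_themes: str


# Tag names below are hypothetical; the structure is what matters.
MASTER_TEMPLATE = Template(
    "Generate a product description page.\n\n"
    "<brand_voice>\n$brand_voice\n</brand_voice>\n\n"
    "<seo_keywords>\n$seo_keywords\n</seo_keywords>\n\n"
    "<product_specs>\n$product_specs\n</product_specs>\n\n"
    "<review_themes>\n$review_themes\n</review_themes>"
)


def render(static: StaticContext, dynamic: DynamicContext) -> str:
    """Fill the master template, refusing to run with any empty section."""
    values = {**vars(static), **vars(dynamic)}
    empty = [name for name, value in values.items() if not value.strip()]
    if empty:
        raise ValueError(f"Missing context: {', '.join(empty)}")
    return MASTER_TEMPLATE.substitute(values)


page_prompt = render(
    StaticContext("Plain and confident.", "trail shoes, running shoes"),
    DynamicContext("280 g, 6 mm drop", "Praise: grip. Complaint: narrow fit."),
)
print(page_prompt)
```

Keeping static and dynamic context in separate objects mirrors how they’re maintained: one is updated rarely, the other is rebuilt for every product.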

Step 4: Orchestrate the Agentic Workflow

Finally, we design the workflow. Instead of one giant, clunky AI call, we chain together a series of specialized agents. Our own digital assembly line. For those interested in the nuts and bolts, my guide on marketing AI agents explains how to build these automated teams.

- The Copywriter Agent: Takes the full prompt and drafts the product description. Its main job is to turn features into benefits and directly address pain points from customer reviews.

- The Brand Editor Agent: Receives the draft and refines it only for brand voice and tone, ensuring it aligns perfectly with the static guide.

- The SEO Optimizer Agent: Takes the edited copy and generates the final SEO metadata—title tag, meta description, and image alt text.

The result? A system that can take a new product from a data sheet to a fully optimized, high-converting product page in under 15 minutes. A process that used to take a human marketer 4+ hours now takes a fraction of that time.

One of my clients implemented this and saw a 12% increase in conversion rates. Why? Because the copy directly incorporated themes from their top customer reviews. That’s not hype. That’s revenue.

The Modern Tools And Stack For Context Engineering

“What tools do I actually need to build this?” It’s one of the first, and most important, questions I get from founders. The tooling landscape can feel like a chaotic mess. But we can cut through that noise by mapping tools to the jobs we need to get done.

You don’t need a dozen new subscriptions. You need the right tool for the right job, whether you’re a bootstrapper building your first AI workflow or a CMO engineering a system for the entire enterprise. Let’s break the stack down.

Retrieval And Data Infrastructure

This is the foundation of any serious context engineering work, especially if you’re using Retrieval-Augmented Generation (RAG). This layer makes your private, proprietary data available to the AI in real time.

You’ve got two main paths here:

- Vector Databases: Tools like Pinecone or Weaviate. These are specialized databases built for one thing: incredibly fast similarity searches. They turn your documents into numerical representations (vectors), letting the AI find the most relevant information almost instantly.

- Simple File Search: Let’s be brutally honest. You don’t always need a complex vector database. If your context is just a handful of well-structured documents, a simple file search inside your agentic workflow might be all you need. Don’t over-engineer it.

The trade-off is clear. Vector databases give you speed and scalability for huge, messy data sets. Simple search is faster to set up for smaller, controlled situations. My advice? Start simple. Only scale to a vector DB when retrieval speed becomes a performance bottleneck.
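To demystify what a similarity search actually does, here’s a toy version in Python. The “embedding” is just a word count, which is a big simplification: real vector databases store vectors produced by a learned embedding model. This is not how Pinecone or Weaviate are called; it only illustrates the idea of comparing a query vector against document vectors.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy 'embedding': word counts. Real systems use a learned embedding model."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[w] * b[w] for w in a if w in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    norm = norm_a * norm_b
    return dot / norm if norm else 0.0


def nearest(query: str, docs: list[str]) -> str:
    """Return the document whose vector is closest to the query vector."""
    q = embed(query)
    return max(docs, key=lambda d: cosine(q, embed(d)))


# Hypothetical document titles, invented for this example.
docs = [
    "brand voice guide for product pages",
    "quarterly sales figures by region",
    "customer reviews for running shoes",
]
print(nearest("sales figures last quarter", docs))
```

For a handful of documents, a loop like this is all the “database” you need. The specialized tools earn their keep when the collection is too large to scan on every query.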

Orchestration Frameworks

If data is the foundation, orchestration frameworks are the plumbing and wiring. These code libraries help you connect all the different components—the LLM, your data sources, and your tools—into a coherent, multi-step workflow.

The big names you’ll hear are LangChain and LlamaIndex. They provide the building blocks for creating the agentic chains I described earlier. Think of them as toolkits that save you from writing thousands of lines of code.

A critical warning: these frameworks are a double-edged sword. LangChain is fantastic for whipping up a prototype in a weekend. But for a mission-critical production system, their layers of abstraction can create performance bottlenecks or make debugging an absolute nightmare.

For a serious, enterprise-grade system, many teams I work with end up building their own lightweight, custom framework. It gives them granular control, cuts down latency, and makes the whole system more transparent and reliable.

The Brains And The Observability

At the center sits the Large Language Model (LLM) itself. Many modern AI applications for content creation are built on the best LLMs available, and the same is true of context engineering. Your choice of model is a key decision, but remember the model is only one piece of the puzzle.

Just as important is your observability platform. Tools like LangSmith or Weights & Biases are becoming non-negotiable. When a complex agentic chain fails, you have to be able to trace every single step. What data was retrieved, what was in the prompt, what the LLM decided. Without that visibility, you’re flying blind.
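Here’s a bare-bones sketch of what step-level tracing means, in Python. To be clear, this is not the LangSmith or Weights & Biases API; the `Trace` class is a hypothetical stand-in that records each step’s input, output, status, and duration so a failure can be traced back to its source.

```python
import time
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class Trace:
    """Records one entry per step so a failed chain can be inspected afterwards."""
    steps: list[dict[str, Any]] = field(default_factory=list)

    def run(self, name: str, fn: Callable[[str], str], payload: str) -> str:
        start = time.perf_counter()
        # Assume failure until the step finishes, so crashes are still recorded.
        record = {"step": name, "input": payload, "output": None, "status": "error"}
        self.steps.append(record)
        try:
            record["output"] = fn(payload)
            record["status"] = "ok"
            return record["output"]
        except Exception as exc:
            record["output"] = repr(exc)
            raise
        finally:
            record["seconds"] = time.perf_counter() - start


trace = Trace()
# The lambdas stand in for a real retriever and a real model call.
docs = trace.run("retrieve", lambda q: "release notes v2", "What changed?")
answer = trace.run("generate", lambda ctx: f"Answer based on: {ctx}", docs)

for step in trace.steps:
    print(step["step"], step["status"], step["input"], "->", step["output"])
```

Even this much answers the three questions that matter when something breaks: what was retrieved, what went into the prompt, and what came back.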

The entire AI market, now valued at $390.91 billion, is driven by this push for practical integration. For marketers, automating workflows that pair natural language processing with context engineering is a huge opportunity: that specific market segment is projected to grow from $42.47 billion in 2025 to $791.16 billion by 2034. You can discover more insights about these market trends and their implications on YouTube. Choosing the right stack is what lets you capture a piece of that value.

Ultimately, the goal is to assemble a stack that serves your business objectives, not just to collect shiny tools. For more on specific applications and platforms, check out our guide on AI marketing automation tools that can fit into this stack.

The Cold, Hard Truth About Context Engineering

I’m a big believer in cutting through the hype, so let’s get straight to it. Context engineering is not some magical incantation you whisper to an AI. It’s hard-nosed engineering. And it comes with real trade-offs that the loudest voices in the AI space forget to mention.

This discipline forces a fundamental shift. You have to stop being a “prompter” and start becoming a “systems designer.” Suddenly, you’re not just writing instructions. You’re managing data pipelines for RAG. Debugging tangled, multi-step agentic chains. And still facing down hallucinations—which can absolutely happen even with good context. It’s a completely different class of problems.

The Cost of Complexity

Then we have to talk about the cost. We’ve moved way beyond your $20/month ChatGPT subscription. Every query to a vector database for a RAG system costs money. Every step in an agentic chain is another API call, and those bills pile up fast. Building a sophisticated context engineering system is a real investment in time and budget.
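A quick back-of-the-envelope calculation shows how those bills add up. The sketch below is in Python, and the token counts and per-1,000-token prices are placeholders I made up for the arithmetic, not any vendor’s actual pricing. Plug in your own provider’s rates.

```python
def chain_cost(
    steps: list[tuple[int, int]],
    price_in_per_1k: float,
    price_out_per_1k: float,
) -> float:
    """Sum the cost of every call in a chain.

    Each step is (input_tokens, output_tokens); prices are per 1,000 tokens.
    """
    total = 0.0
    for tokens_in, tokens_out in steps:
        total += tokens_in / 1000 * price_in_per_1k
        total += tokens_out / 1000 * price_out_per_1k
    return total


# Hypothetical three-agent chain: copywriter, brand editor, SEO optimizer.
steps = [(3000, 500), (1500, 500), (1000, 200)]

# Hypothetical prices, chosen only to make the arithmetic easy to follow.
per_page = chain_cost(steps, price_in_per_1k=0.01, price_out_per_1k=0.03)
per_thousand_pages = per_page * 1000

print(f"per page: ${per_page:.3f}, per 1,000 pages: ${per_thousand_pages:.2f}")
```

Under these made-up numbers, one page costs about nine cents and a thousand pages cost about $91. Small per call, but every extra step in the chain multiplies across every run.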

The biggest mistake I see is people over-engineering things. They get so excited about building a complex RAG system that they throw it at problems where a simple, well-structured prompt would have done the job just fine.

This is where strategy becomes everything. You don’t need an elaborate context engineering setup to get a summary of your meeting notes. That’s using a sledgehammer to crack a nut. A colossal waste of resources that will kill your ROI before you even get off the ground.

A Simple Framework for Deciding

So, when does it make sense to take the leap from basic prompting to a full-blown context engineering system? Let’s make this simple.

- Use Basic Prompts When: Your task is self-contained. It doesn’t need any outside or real-time knowledge, and the cost of getting it wrong is low. Think one-off content drafts or brainstorming.

- Invest in Context Engineering When: The task relies on your proprietary data. It demands strict consistency across many outputs. Or it’s a core part of a high-value, repeatable business process. Think automated product page creation, personalized customer support bots, or dynamic market analysis.

Admitting these trade-offs isn’t a sign of weakness. It’s the mark of a professional. It’s how you build trust and make sure you’re applying the right tool to the right problem. It’s how you sidestep costly mistakes and turn AI from a neat toy into a machine that generates revenue.

Your Top Context Engineering Questions Answered

You’ve got questions. I get it. Whenever I walk clients through this, a few key queries always pop up. Let’s tackle them head-on, no nonsense. These are the practical roadblocks that keep people from getting started, and I want to clear them for you right now.

How Is Context Engineering Different from Just Making Prompts Longer?

This is the most common rookie mistake. Just dumping more words into a prompt is almost always worse. It leads to what we call ‘context rot,’ where the model completely loses focus and performance plummets. Your AI gets confused by the noise.

Context engineering is the complete opposite. It’s about delivering structured, highly relevant, and surgically precise information.

A long, rambling prompt is like an unfocused, hour-long meeting with no agenda. A well-engineered context packet is like a tight, actionable surgical brief. One gets you fired; the other gets you results.

Do I Need to Be a Coder to Do This?

No, but your thinking has to become more strategic. A developer is essential for building robust, production-grade systems like a full RAG pipeline. But the core principles of context engineering are about strategy, not syntax.

As a marketer or business leader, your job is to be the architect of the ‘context packet.’ You define the brand voice, you curate the essential data, and you provide the gold-standard examples. You create the blueprint.

A developer then takes your blueprint and implements it. Plus, we’re seeing powerful low-code tools emerge that are rapidly bridging this gap, putting more power directly into the hands of non-technical leaders.

What’s the First Small Step I Can Take to Start?

I’ll give you a simple, powerful first step you can take today. Find one recurring task where you normally use messy, one-off prompts. Ditch them. Instead, create a ‘master prompt’ template using clear, structured tags.

Try organizing your request with simple, XML-style tags like these:

- <BRAND_VOICE>

- <TARGET_AUDIENCE>

- <KEY_MESSAGE>

- <EXAMPLE_POST>

Structuring your request this way forces you to think like a context engineer. This simple habit immediately starts building the muscle for more systematic thinking. A small change that delivers a big shift in output quality.